Video capture hardware takes analog video input and converts it into digital image data

Video is a sequence of images

Full resolution is typically 640x480, 30 frames/second
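
The raw data rate follows directly from these numbers. A minimal sketch, assuming 3 bytes per pixel (24-bit color, which matches the BGR layout described later); the function name is hypothetical:

```c
#include <assert.h>
#include <stddef.h>

/* Raw, uncompressed video data rate in bytes per second.
   The caller supplies bytes_per_pixel; 3 (24-bit color) is an assumption. */
size_t video_bytes_per_second(size_t width, size_t height,
                              size_t bytes_per_pixel, size_t fps)
{
    assert(width > 0 && height > 0 && bytes_per_pixel > 0 && fps > 0);
    return width * height * bytes_per_pixel * fps;
}
```

For 640 x 480 at 30 frames/second this is 640 * 480 * 3 * 30 = 27,648,000 bytes per second.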

Uses:

Video interaction can use advanced computer vision algorithms, or simpler hacks

Interaction can use special tools, or the user's own body

Interaction without tools usually detects either motion or the body's silhouette
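
One simple way to detect motion is frame differencing: compare each pixel with the same pixel in the previous frame and count how many changed. The sketch below is only an illustration of that idea, not necessarily what the systems pictured here do. It assumes tightly packed 3-bytes-per-pixel frames of equal size; the function names and thresholds are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Counts pixels whose summed per-channel difference between two frames
   exceeds `threshold`. Both frames are assumed to be tightly packed,
   3 bytes per pixel, width * height pixels each. */
size_t count_changed_pixels(const unsigned char *prev, const unsigned char *curr,
                            size_t width, size_t height, int threshold)
{
    size_t changed = 0;
    size_t i, n = width * height;

    assert(prev != NULL && curr != NULL);
    for (i = 0; i < n; i++) {
        int diff = abs(curr[3 * i]     - prev[3 * i])
                 + abs(curr[3 * i + 1] - prev[3 * i + 1])
                 + abs(curr[3 * i + 2] - prev[3 * i + 2]);
        if (diff > threshold)
            changed++;
    }
    return changed;
}

/* Returns 1 when more than `min_fraction` of the pixels changed, else 0. */
int motion_detected(const unsigned char *prev, const unsigned char *curr,
                    size_t width, size_t height, int threshold,
                    double min_fraction)
{
    size_t changed = count_changed_pixels(prev, curr, width, height, threshold);
    return (double)changed > min_fraction * (double)(width * height);
}
```

The per-pixel threshold absorbs camera noise, and the fraction keeps a few flickering pixels from counting as motion. Both values would need tuning for a real camera.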

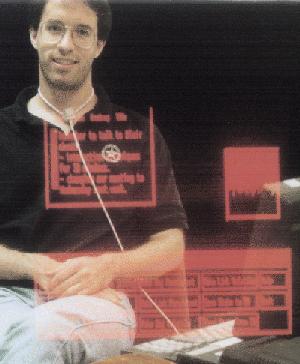

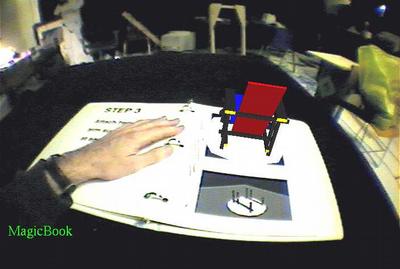

Interaction may be focused on the real world, or on the user's image in the video display

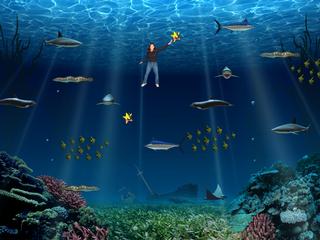

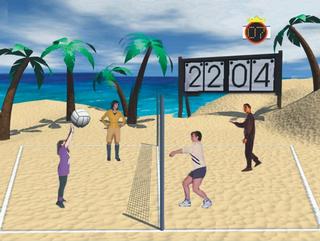

Mixed Reality Systems Laboratory (Aquagauntlet, etc)

Live video in Linux uses the v4l (Video4Linux) driver

Can use a video capture card, or USB webcam

Basic steps:

Commands are sent via ioctl()

Status is read via ioctl()

Video data area is set up via mmap()

Capturing can be double-buffered (or triple-buffered or more)

This improves speed and helps avoid blocking to wait for data

videoInput.h

videoInput.cxx - videoInput class for C++

videoInput-py.c - videoInput extension for Python (build as videoInput.so)

grab.py - test program - grabs a sequence of frames

Video capture data is an array of BGR pixels

Can be drawn in OpenGL using glDrawPixels

Image data runs from top to bottom - use glPixelZoom to flip

Probably want to disable depth buffer when drawing image

Example code:
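
A minimal sketch of the data side only, assuming a tightly packed frame with 3 bytes per pixel in B, G, R order; the function names are hypothetical. It shows how a pixel is addressed, and a CPU-side alternative to glPixelZoom: reversing the row order in memory so the frame is already bottom-to-top when it is drawn.

```c
#include <assert.h>
#include <stddef.h>

#define BYTES_PER_PIXEL 3   /* one byte each for blue, green, red */

/* Returns a pointer to the B, G, R bytes of pixel (x, y).
   Row 0 is the first row stored in memory. */
unsigned char *bgr_pixel(unsigned char *frame, size_t width, size_t x, size_t y)
{
    assert(frame != NULL && x < width);
    return frame + (y * width + x) * BYTES_PER_PIXEL;
}

/* Reverses the row order in place, so a frame stored top-to-bottom
   becomes bottom-to-top (the order glDrawPixels draws in by default). */
void flip_rows(unsigned char *frame, size_t width, size_t height)
{
    size_t row_bytes = width * BYTES_PER_PIXEL;
    size_t top, i;

    assert(frame != NULL);
    for (top = 0; top < height / 2; top++) {
        unsigned char *a = frame + top * row_bytes;
        unsigned char *b = frame + (height - 1 - top) * row_bytes;
        for (i = 0; i < row_bytes; i++) {
            unsigned char t = a[i];
            a[i] = b[i];
            b[i] = t;
        }
    }
}
```

The flipped frame could then be passed to `glDrawPixels(width, height, GL_BGR, GL_UNSIGNED_BYTE, frame)`. Flipping at draw time with `glPixelZoom(1.0, -1.0)` avoids the extra copy work, so the in-memory flip is mainly useful when the data is also needed elsewhere in bottom-to-top order.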

Video data can be turned into a texture

Pass data buffer to glTexSubImage2D

For live video texture, redefine texture each frame, using same texture ID

Example code:

Texture sizes must be powers of 2 (e.g. 512 x 512)

Video data is typically something like 640 x 480 - not powers of 2

glTexSubImage2D allows one to load a portion of a texture

For video textures, create a larger texture (e.g. 1024 x 512), and sub-load the video data into it

glTexSubImage2D(GL_TEXTURE_2D, 0, xoffset, yoffset, width, height, format, type, pixels)
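
The size of the larger texture can be computed rather than hard-coded. A minimal sketch, with a hypothetical helper name, that rounds each video dimension up to the next power of 2:

```c
#include <assert.h>

/* Smallest power of two that is >= n, for 1 <= n <= 2^31. */
unsigned int next_power_of_two(unsigned int n)
{
    unsigned int p = 1;

    assert(n >= 1 && n <= 0x80000000u);
    while (p < n)
        p *= 2;
    return p;
}
```

For 640 x 480 video this gives a 1024 x 512 texture. The texture would be allocated once at that size, and each frame then sub-loaded at offset (0, 0) with the video's own width and height.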

Texture coordinates will have to use a smaller range (not 0 ... 1): for a 640 pixel wide video in a 1024 pixel wide texture, the S coordinate ranges from 0 to 0.625

Texture transformation matrix can be used to adjust texture coordinates
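
A minimal sketch of both calculations, with hypothetical function names: the upper limit of the texture coordinate range, and a scaling matrix that lets geometry keep using coordinates from 0 to 1.

```c
#include <assert.h>

/* Largest texture coordinate that still falls inside the video image,
   for `video_size` pixels stored in a texture `texture_size` pixels wide
   (or high). */
float max_tex_coord(unsigned int video_size, unsigned int texture_size)
{
    assert(video_size > 0 && video_size <= texture_size);
    return (float)video_size / (float)texture_size;
}

/* Fills `m` with a column-major 4x4 matrix that scales S by s_max and
   T by t_max, mapping 0..1 onto the part of the texture holding video. */
void texture_scale_matrix(float m[16], float s_max, float t_max)
{
    int i;

    for (i = 0; i < 16; i++)
        m[i] = 0.0f;
    m[0]  = s_max;
    m[5]  = t_max;
    m[10] = 1.0f;
    m[15] = 1.0f;
}
```

For 640 x 480 video in a 1024 x 512 texture the limits are 640 / 1024 = 0.625 for S and 480 / 512 = 0.9375 for T. The matrix could be loaded with `glMatrixMode(GL_TEXTURE)` followed by `glLoadMatrixf(m)`, which has the same effect as `glScalef(0.625, 0.9375, 1.0)` on an identity texture matrix.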