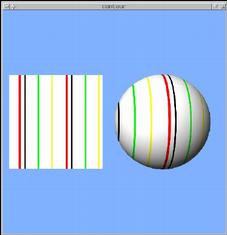

Textures provide color information, but so does glColor or lighting.

The texture environment controls how these colors interact.

glTexEnvf(GL_TEXTURE_ENV, GL_TEXTURE_ENV_MODE, mode)

A "GL_REPLACE" environment uses just the texture color, and ignores everything else.

Color = Ct

A "GL_MODULATE" environment combines the texture and other color, multiplying them together.

Color = Ct * Cf

where Ct is the texture color and Cf is the incoming fragment color (from glColor or lighting).
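To make the two formulas concrete, here is a small pure-Python model of the per-channel arithmetic. It assumes colors are RGB tuples with components from 0.0 to 1.0. The function names are hypothetical; OpenGL performs this calculation itself for every fragment.

```python
# Hypothetical model of the GL_REPLACE and GL_MODULATE texture environments.
# Colors are (r, g, b) tuples with components in the range 0.0 .. 1.0.

def tex_replace(ct, cf):
    """GL_REPLACE: the result is just the texture color Ct; Cf is ignored."""
    return tuple(ct)

def tex_modulate(ct, cf):
    """GL_MODULATE: multiply texture color Ct and fragment color Cf per channel."""
    return tuple(t * f for t, f in zip(ct, cf))

texel = (1.0, 0.5, 0.25)      # color sampled from the texture
fragment = (0.5, 0.5, 1.0)    # color from glColor or lighting

print(tex_replace(texel, fragment))   # (1.0, 0.5, 0.25)
print(tex_modulate(texel, fragment))  # (0.5, 0.25, 0.25)
```

Because white (1, 1, 1) is the identity for multiplication, modulating with a white fragment color leaves the texture color unchanged.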

Example: texenv.py

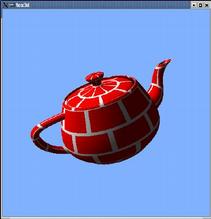

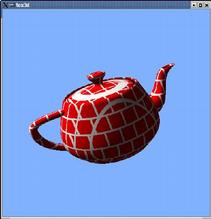

GL_MODULATE mode is used when objects are both textured and lit, so that the lighting shades the texture color.

Example: texlight.py

Applying a texture involves sampling - for each pixel drawn on the screen, OpenGL must compute a color from the texture image.

Texels (texture pixels) rarely match up exactly 1-to-1 with the pixels on the screen.

Filtering modes select what to do when texels are larger than screen pixels (magnification), or when texels are smaller than screen pixels (minification).

glTexParameteri(GL_TEXTURE_2D, filter, mode)

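If filter is GL_TEXTURE_MAG_FILTER, mode can be GL_NEAREST or GL_LINEAR.

If filter is GL_TEXTURE_MIN_FILTER, mode can be GL_NEAREST, GL_LINEAR, or one of the mipmap modes: GL_NEAREST_MIPMAP_NEAREST, GL_LINEAR_MIPMAP_NEAREST, GL_NEAREST_MIPMAP_LINEAR, or GL_LINEAR_MIPMAP_LINEAR.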

Example: texfilter.py
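The difference between "nearest" and "linear" filtering can be sketched in one dimension. This is a hypothetical pure-Python model, assuming a 1D texture stored as a list of intensities, texel centers at (i + 0.5) / n, and edge clamping; in 2D, GL_LINEAR blends four texels in the same way.

```python
import math

# Hypothetical 1D model of texture filtering (OpenGL does this in hardware).
# 'texels' is a list of intensities; s is a texture coordinate in 0.0 .. 1.0.
# Out-of-range texel indices are clamped to the edge.

def sample_nearest(texels, s):
    """GL_NEAREST: use the single texel whose area contains s."""
    n = len(texels)
    i = min(max(int(math.floor(s * n)), 0), n - 1)
    return texels[i]

def sample_linear(texels, s):
    """GL_LINEAR: blend the two texels whose centers surround s."""
    n = len(texels)
    u = s * n - 0.5                 # texel centers sit at i + 0.5
    i0 = int(math.floor(u))
    frac = u - i0                   # weight of the right-hand texel
    left = texels[min(max(i0, 0), n - 1)]
    right = texels[min(max(i0 + 1, 0), n - 1)]
    return (1.0 - frac) * left + frac * right

texture = [0.0, 1.0]                # a 2-texel black/white texture
print(sample_nearest(texture, 0.4)) # 0.0  (hard edge)
print(sample_linear(texture, 0.5))  # 0.5  (smooth blend between the texels)
```

When a tiny texture is magnified across many screen pixels, the nearest version produces blocky steps while the linear version produces a smooth gradient.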

Mipmapping uses a series of progressively smaller versions of the texture image (each half the size of the previous one) to effectively pre-compute the average of many texels.

As a texture gets more and more minified, many texels will correspond to a single screen pixel, and using a "nearest" or "linear" filter will not compute an accurate result. The texture will appear to flicker as the object moves.

A mipmapped texture avoids the flickering problem when minified. The trade-off is that it can look a bit fuzzier.

gluBuild2DMipmaps(GL_TEXTURE_2D, GL_RGB, width, height, GL_RGB, GL_UNSIGNED_BYTE, pixels)

Use gluBuild2DMipmaps in place of glTexImage2D.

It automatically generates all the smaller versions of the texture. The smaller levels are only used when the minification filter is one of the *_MIPMAP_* modes.

Example: mipmap.py
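The idea behind building the smaller versions can be sketched as repeated 2x2 averaging. This is a hypothetical pure-Python illustration on a grayscale image stored as a list of rows; it is not GLU's actual filtering code, and it assumes power-of-two dimensions.

```python
# Hypothetical sketch of building a mipmap chain by 2x2 box filtering.
# Images are lists of rows of grayscale values; dimensions are powers of two.

def mip_sizes(width, height):
    """List the (width, height) of every level, down to 1 x 1."""
    sizes = [(width, height)]
    while width > 1 or height > 1:
        width, height = max(width // 2, 1), max(height // 2, 1)
        sizes.append((width, height))
    return sizes

def downsample(image):
    """Halve each dimension, averaging each 2x2 (or smaller) block of texels."""
    h, w = len(image), len(image[0])
    nh, nw = max(h // 2, 1), max(w // 2, 1)
    out = []
    for y in range(nh):
        row = []
        for x in range(nw):
            # Source rows and columns covered by this output texel.
            ys = range(2 * y, min(2 * y + 2, h))
            xs = range(2 * x, min(2 * x + 2, w))
            block = [image[yy][xx] for yy in ys for xx in xs]
            row.append(sum(block) / len(block))
        out.append(row)
    return out

def build_mipmaps(image):
    """Return [level 0, level 1, ...] ending with a 1 x 1 image."""
    levels = [image]
    while len(levels[-1]) > 1 or len(levels[-1][0]) > 1:
        levels.append(downsample(levels[-1]))
    return levels

checker = [[(x + y) % 2 for x in range(4)] for y in range(4)]
print(mip_sizes(256, 64))
print(build_mipmaps(checker)[-1])   # [[0.5]]: the fine checker averages to gray
```

The fine checkerboard, which would flicker between black and white under "nearest" minification, becomes a steady mid-gray in the smaller levels.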

Texture image data can also be created in memory, instead of reading from a file.

The data must be an array of bytes. In Python 3 this is a bytes object (in Python 2, a plain string).

Byte data can be constructed from numeric values using the struct module.

```python
import struct

def createTextureString(rgbList):
    # Pack each (r, g, b) triple as three unsigned bytes (0-255).
    s = b''
    for rgb in rgbList:
        s += struct.pack('BBB', rgb[0], rgb[1], rgb[2])
    return s
```
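As a usage sketch, the same struct-based packing can generate a whole texture procedurally. The function name and checkerboard pattern here are hypothetical; the result would be passed as the pixels argument of glTexImage2D with GL_RGB and GL_UNSIGNED_BYTE.

```python
import struct

# Hypothetical example: build a black-and-white checkerboard in memory.
# The result holds width * height * 3 bytes (RGB, one byte per channel),
# row by row, starting with the row at t = 0.

def make_checkerboard(width, height, square):
    data = bytearray()
    for y in range(height):
        for x in range(width):
            # Alternate colors every 'square' texels in both directions.
            on = ((x // square) + (y // square)) % 2 == 0
            value = 255 if on else 0
            data += struct.pack('BBB', value, value, value)
    return bytes(data)

pixels = make_checkerboard(8, 8, 2)
print(len(pixels))   # 192  (8 * 8 texels * 3 bytes)
```

With OpenGL's default unpack alignment of 4, each row of data should be a multiple of 4 bytes long (true here: 8 * 3 = 24); otherwise call glPixelStorei(GL_UNPACK_ALIGNMENT, 1) before loading.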

Very long image sequences (such as video or animation used as a texture) can exceed graphics card memory.


In that case, it's more efficient to create a single texture, and redefine it on the fly, than to use many separate textures.

Call glTexImage2D to reload a texture image at any time while the target texture is bound.

glTexSubImage2D allows one to load a portion of a texture.

For video frames whose dimensions are not powers of two, create a larger power-of-two texture (e.g. 1024 x 512), and sub-load the video data into it.

glTexSubImage2D(GL_TEXTURE_2D, 0, xoffset, yoffset, width, height, format, type, pixels)

Texture coordinates must then use a smaller range (not 0 ... 1).

e.g. for a 640 pixel wide video in a 1024 pixel wide texture, the S coordinate ranges from 0 to 0.625 (640 / 1024).
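The size calculation can be sketched as a small helper. The function names are hypothetical; it picks the next power of two in each dimension and returns the maximum S and T the frame occupies.

```python
# Hypothetical helper: choose a power-of-two texture size for a video frame
# and compute the texture-coordinate range the frame occupies inside it.

def next_power_of_two(n):
    p = 1
    while p < n:
        p *= 2
    return p

def subimage_coord_range(frame_w, frame_h):
    tex_w, tex_h = next_power_of_two(frame_w), next_power_of_two(frame_h)
    # Maximum S and T: the fraction of the texture covered by the frame.
    return (tex_w, tex_h), (frame_w / tex_w, frame_h / tex_h)

print(subimage_coord_range(640, 480))   # ((1024, 512), (0.625, 0.9375))
```

A 640 x 480 frame therefore fits in the 1024 x 512 texture mentioned above, with S running from 0 to 0.625 and T from 0 to 0.9375.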

Compressed textures reduce the amount of memory needed.

They can also be downloaded to the graphics card faster.

glCompressedTexImage2DARB(GL_TEXTURE_2D, 0, format, xdim, ydim, 0, size, imageData)
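The size argument is the number of bytes of compressed data, which depends on the format. As one example, the S3TC/DXT formats (from the EXT_texture_compression_s3tc extension) store each 4 x 4 block of texels in 8 bytes (DXT1) or 16 bytes (DXT3, DXT5). The sketch below assumes those block sizes; other compressed formats use different layouts.

```python
# Hypothetical helper computing the 'size' argument for one mipmap level of
# an S3TC/DXT-compressed image. DXT1 uses 8 bytes per 4x4 block of texels;
# DXT3 and DXT5 use 16 bytes per block.

def s3tc_image_size(width, height, bytes_per_block):
    blocks_x = (width + 3) // 4    # partial blocks at the edges still take
    blocks_y = (height + 3) // 4   # a full block of storage
    return blocks_x * blocks_y * bytes_per_block

uncompressed = 256 * 256 * 3              # GL_RGB, one byte per channel
dxt1 = s3tc_image_size(256, 256, 8)
print(uncompressed, dxt1, uncompressed / dxt1)   # 196608 32768 6.0
```

For a 256 x 256 RGB image this is a 6:1 reduction in memory and in the amount of data sent to the card.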

Alpha textures use a 4-channel (RGBA) texture image.

The alpha channel can be used to "cut out" part of a texture image, creating complex shapes with simple geometry.

[Figure: RGB image and its Alpha channel]
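Here is a hypothetical sketch of a cut-out texture: a square RGBA image whose alpha channel is 255 inside a circle and 0 outside. It assumes the cut is made with an alpha test, as with glAlphaFunc(GL_GREATER, 0.5) and GL_ALPHA_TEST enabled in fixed-function OpenGL; the passes_alpha_test function only models that comparison.

```python
import struct

# Hypothetical sketch: an RGBA texture whose alpha channel cuts a circle out
# of a square. Texels outside the circle get alpha 0.

def make_circle_cutout(size):
    data = bytearray()
    r = size / 2.0
    for y in range(size):
        for x in range(size):
            # Distance from the texel center to the image center.
            dx, dy = x + 0.5 - r, y + 0.5 - r
            alpha = 255 if dx * dx + dy * dy <= r * r else 0
            data += struct.pack('BBBB', 255, 128, 0, alpha)   # orange texel
    return bytes(data)

def passes_alpha_test(alpha_byte, reference=0.5):
    """Model of glAlphaFunc(GL_GREATER, reference) for one texel."""
    return alpha_byte / 255.0 > reference

pixels = make_circle_cutout(16)
alphas = pixels[3::4]                  # every fourth byte is alpha
kept = sum(passes_alpha_test(a) for a in alphas)
print(len(pixels), kept)
```

Drawn on a single quad, this texture shows a disc: the texels that fail the test are discarded, so no circular geometry is needed.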

Transformations - translation, rotation, and scaling - can be applied to texture coordinates, similar to how they are applied to geometry. The exact same OpenGL function calls are used. The only difference is that the matrix mode is changed to GL_TEXTURE.

glMatrixMode(GL_TEXTURE)

glTranslatef(0.1, 0.05, 0)

glRotatef(30.0, 0, 0, 1)

glMatrixMode(GL_MODELVIEW)

Setting the matrix mode to GL_TEXTURE means that any subsequent transformation calls will be applied to texture coordinates, rather than vertex coordinates.
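What the texture matrix does to a single (s, t) coordinate can be modelled with 3x3 homogeneous 2D matrices. This is a hypothetical pure-Python sketch; it assumes, as for the modelview matrix, that each glTranslatef/glRotatef call post-multiplies the current matrix, so the last call (the rotation) is applied to the coordinate first.

```python
import math

# Hypothetical model of the GL_TEXTURE matrix acting on (s, t).

def translate(tx, ty):
    return [[1, 0, tx], [0, 1, ty], [0, 0, 1]]

def rotate(degrees):
    a = math.radians(degrees)
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def transform(m, s, t):
    v = (s, t, 1)
    return tuple(sum(m[i][k] * v[k] for k in range(3)) for i in range(2))

# Equivalent of: glTranslatef(0.1, 0.05, 0); glRotatef(30.0, 0, 0, 1)
texture_matrix = matmul(translate(0.1, 0.05), rotate(30.0))
print(transform(texture_matrix, 1.0, 0.0))   # approximately (0.966, 0.55)
```

Note that shifting the texture coordinates by +0.1 makes the image itself appear to move the opposite way across the surface.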

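One trick a texture transformation makes possible is keeping a texture applied at the same scale as an object is scaled: scale the texture coordinates by the same factor as the geometry, so that (with GL_REPEAT wrapping) the texture repeats rather than stretches.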

glMatrixMode(GL_TEXTURE)

glLoadIdentity()

glScalef(size, 1, 1)

glMatrixMode(GL_MODELVIEW)

glScalef(size, 1, 1)
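The reasoning behind the trick can be checked with a little arithmetic. This hypothetical sketch assumes a quad of base width 1.0 whose S coordinate runs from 0 to 1, scaled by size in X, and GL_REPEAT wrapping.

```python
# Hypothetical check of the fixed-scale trick: how much world width does one
# repeat of the texture cover, with and without the texture-matrix scale?

def world_width_per_repeat(base_width, size, scale_texture=True):
    world_width = base_width * size              # glScalef on the geometry
    s_range = size if scale_texture else 1.0     # glScalef on the texture matrix
    return world_width / s_range                 # world units per texture repeat

for size in (1.0, 2.0, 3.5):
    print(size, world_width_per_repeat(1.0, size),
          world_width_per_repeat(1.0, size, scale_texture=False))
```

With the texture matrix scaled, one repeat always covers 1.0 world unit; without it, the single copy of the texture stretches to the object's full width.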


To have OpenGL generate texture coordinates automatically, use the function glTexGen, and glEnable the modes GL_TEXTURE_GEN_S & GL_TEXTURE_GEN_T.

```python
planeCoefficients = [1, 0, 0, 0]
glTexGeni(GL_S, GL_TEXTURE_GEN_MODE, GL_OBJECT_LINEAR)
glTexGenfv(GL_S, GL_OBJECT_PLANE, planeCoefficients)
glEnable(GL_TEXTURE_GEN_S)

glBegin(GL_QUADS)
glVertex3f(-3.25, -1, 0)
glVertex3f(-1.25, -1, 0)
glVertex3f(-1.25, 1, 0)
glVertex3f(-3.25, 1, 0)
glEnd()
```

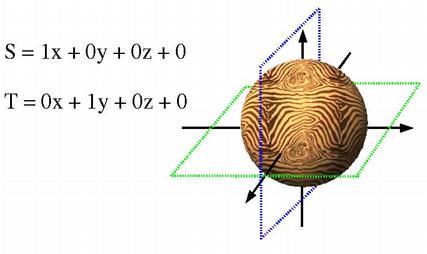

TexGen, in GL_OBJECT_LINEAR and GL_EYE_LINEAR modes, is based on plane equations.

The texture coordinate is, effectively, based on the distance of the vertex from a plane.

S = Ax + By + Cz + D
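To see what the glTexGen example computes, the plane equation can be evaluated by hand. This pure-Python sketch (hypothetical function name) models GL_OBJECT_LINEAR with w = 1 and uses the same plane coefficients and quad vertices as the example.

```python
# Model of GL_OBJECT_LINEAR texture generation: S = A*x + B*y + C*z + D*w,
# with (A, B, C, D) the coefficients given to glTexGenfv(GL_S, GL_OBJECT_PLANE, ...)
# and w = 1 for ordinary vertices.

def object_linear(plane, vertex):
    x, y, z = vertex
    a, b, c, d = plane
    return a * x + b * y + c * z + d * 1.0

plane = [1, 0, 0, 0]       # same coefficients as the glTexGen example: S = x
quad = [(-3.25, -1, 0), (-1.25, -1, 0), (-1.25, 1, 0), (-3.25, 1, 0)]
print([object_linear(plane, v) for v in quad])   # [-3.25, -1.25, -1.25, -3.25]
```

S spans 2 units across the quad, so a texture with GL_REPEAT wrapping appears twice horizontally.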

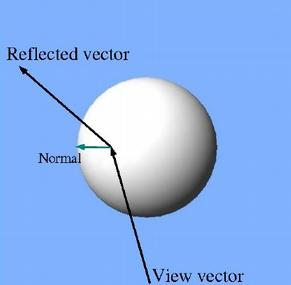

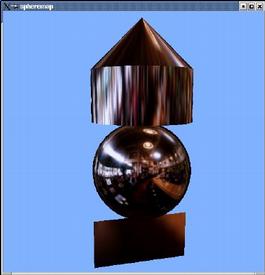

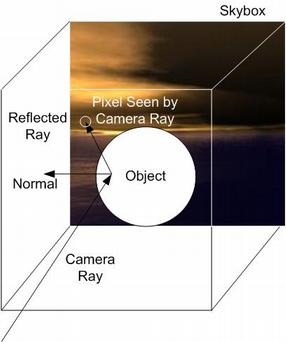

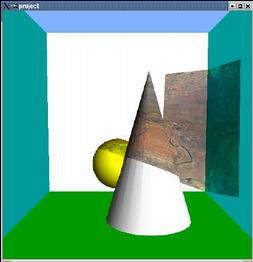

Sphere mapping, a.k.a. reflection mapping or environment mapping, is a texgen mode that simulates a reflective surface.

A sphere-mapped texture coordinate is computed by taking the vector from the viewpoint (the eye), and reflecting it about the surface's normal vector. The resulting direction is then used to look up a point in the texture. The texture image is assumed to be warped to provide a 360 degree view of the environment being reflected.
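The reflection and lookup can be sketched following the GL_SPHERE_MAP formula in the OpenGL specification: r = u - 2n(n . u), m = 2 sqrt(rx^2 + ry^2 + (rz + 1)^2), s = rx/m + 1/2, t = ry/m + 1/2. This pure-Python model uses hypothetical function names and assumes eye-space inputs, with the eye at the origin.

```python
import math

# Model of GL_SPHERE_MAP texture-coordinate generation. All vectors are in
# eye coordinates: u = unit vector from the eye (origin) to the vertex,
# n = unit surface normal. The reflected vector r is mapped to (s, t).

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def sphere_map(vertex, normal):
    u = normalize(vertex)
    n = normalize(normal)
    d = sum(a * b for a, b in zip(n, u))
    r = tuple(uc - 2.0 * nc * d for uc, nc in zip(u, n))     # reflect u about n
    m = 2.0 * math.sqrt(r[0] ** 2 + r[1] ** 2 + (r[2] + 1.0) ** 2)
    return r[0] / m + 0.5, r[1] / m + 0.5

# A surface facing the viewer straight on reflects back to the map's center.
print(sphere_map((0.0, 0.0, -5.0), (0.0, 0.0, 1.0)))   # (0.5, 0.5)
```

Tilting the normal toward +x moves the lookup toward the right-hand side of the sphere-map image.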

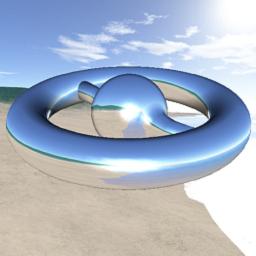

Cube mapping is a newer method of reflection mapping.

The reflection texture is considered to be 6 2D textures forming a cube that surrounds the scene.

This allows for more correct reflection behavior as the camera moves about.
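A cube map lookup picks one of the 6 faces from the largest component of the direction vector, then divides the other two components by it. This hypothetical pure-Python sketch follows the major-axis table in the OpenGL specification; in OpenGL the direction would typically come from GL_REFLECTION_MAP texture generation.

```python
# Model of how a cube map chooses a face and a 2D coordinate for a
# direction (rx, ry, rz), following the OpenGL major-axis table.

def cube_map_lookup(rx, ry, rz):
    ax, ay, az = abs(rx), abs(ry), abs(rz)
    if ax >= ay and ax >= az:                    # X is the major axis
        face, sc, tc, ma = ('+X', -rz, -ry, ax) if rx > 0 else ('-X', rz, -ry, ax)
    elif ay >= az:                               # Y is the major axis
        face, sc, tc, ma = ('+Y', rx, rz, ay) if ry > 0 else ('-Y', rx, -rz, ay)
    else:                                        # Z is the major axis
        face, sc, tc, ma = ('+Z', rx, -ry, az) if rz > 0 else ('-Z', -rx, -ry, az)
    # Map sc/ma and tc/ma from -1 .. 1 into 0 .. 1 on the chosen face.
    return face, (sc / ma + 1.0) / 2.0, (tc / ma + 1.0) / 2.0

print(cube_map_lookup(1.0, 0.5, 0.0))    # ('+X', 0.5, 0.25)
print(cube_map_lookup(0.0, 0.0, -2.0))   # ('-Z', 0.5, 0.5)
```

Only the direction matters, not its length, which is why the cube behaves as if it were infinitely far away.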

Projected textures use 4 texture coordinates: S, T, R, & Q.


Texture projection works similarly to a 3D rendering projection.

A rendering projection takes the (X, Y, Z, W) coordinates of vertices, and converts them into (X, Y) screen positions.

A projection loaded in the GL_TEXTURE matrix can take (S, T, R, Q) texture coordinates and transform them into different (S, T) texture coordinates.
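The final step of a projected texture lookup is a divide by Q, just as a rendering projection divides X and Y by W. This is a hypothetical pure-Python sketch; the 4x4 matrix below is a minimal stand-in for a real projection, chosen so that Q becomes -R.

```python
# Model of a texture projection: the GL_TEXTURE matrix (4x4) transforms
# (s, t, r, q); the final 2D lookup divides s and t by q.

def project_texcoord(matrix, coord):
    s, t, r, q = (sum(matrix[i][k] * coord[k] for k in range(4)) for i in range(4))
    return s / q, t / q

# Hypothetical minimal perspective-style matrix: q becomes -r, so coordinates
# farther along -r are divided by a larger number (perspective foreshortening).
perspective = [[1, 0, 0, 0],
               [0, 1, 0, 0],
               [0, 0, 1, 0],
               [0, 0, -1, 0]]
print(project_texcoord(perspective, (2.0, 1.0, -4.0, 1.0)))   # (0.5, 0.25)
```

In practice a scale-and-bias of 0.5 is usually applied as well, mapping the projection's -1 .. 1 range into the 0 .. 1 texture range.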

Projecting a texture thus involves 2 major steps:


[Figure: 2D texture vs. 3D texture]

A 2D texture is like a photograph pasted on the surface of an object.

A 3D texture can make an object look as if it were carved out of a solid block of material.

A 3D texture can be thought of as a stack of 2D images, filling a 3D cube.

3D texturing adds an R texture coordinate to the existing S & T coordinates.
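Treating a 3D texture as a stack of 2D images, R simply selects which image to read. This is a hypothetical nearest-texel sketch, assuming the volume is stored as volume[slice][row][column] and all coordinates run from 0.0 to 1.0.

```python
# Hypothetical nearest-texel lookup in a 3D texture stored as a stack of 2D
# images: R selects the slice, T the row, S the column.

def sample_3d_nearest(volume, s, t, r):
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    def index(coord, n):
        return min(max(int(coord * n), 0), n - 1)   # clamp to the edge
    return volume[index(r, depth)][index(t, height)][index(s, width)]

# A 2 x 2 x 2 volume: slice 0 is dark, slice 1 is bright.
volume = [[[0, 0], [0, 0]],
          [[9, 9], [9, 9]]]
print(sample_3d_nearest(volume, 0.3, 0.3, 0.2))   # 0  (first slice)
print(sample_3d_nearest(volume, 0.3, 0.3, 0.8))   # 9  (second slice)
```

Because every point inside the block has a texel, a surface cut at any angle through the volume shows consistent "carved" detail.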

3D textures are used in scientific visualization for volume rendering.

A dense stack of quads is rendered, slicing through the volume.

Alpha transparency is used to see into the volume.
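How the slices add up along one line of sight can be sketched as back-to-front alpha blending, the same per-pixel blend glBlendFunc(GL_SRC_ALPHA, GL_ONE_MINUS_SRC_ALPHA) performs. This hypothetical model assumes grayscale colors and slices drawn from farthest to nearest.

```python
# Hypothetical model of back-to-front compositing of volume slices:
#   result = alpha * slice_color + (1 - alpha) * color_already_drawn

def composite_back_to_front(slices, background=0.0):
    """slices: list of (color, alpha) pairs ordered from farthest to nearest."""
    color = background
    for c, a in slices:
        color = a * c + (1.0 - a) * color
    return color

# Three partly transparent slices seen along one line of sight.
print(composite_back_to_front([(1.0, 0.5), (0.0, 0.5), (1.0, 0.5)]))   # 0.625
```

Drawing the quads in back-to-front order matters: this blend equation gives a different answer if the same slices are drawn front to back.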

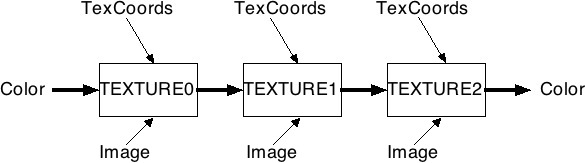

Multitexturing allows the use of multiple textures at one time.

An ordinary texture combines the base color of a polygon with color from the texture image. In multitexturing, this result of the first texturing can be combined with color from another texture.


Multitexturing involves 2 or more "texture units".

Most current hardware has from 2 to 8 texture units.

Each unit has its own texture image and environment.

Each vertex has distinct texture coordinates for each texture unit.
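The chaining of texture units can be modelled as a loop in which each unit's output feeds the next. This hypothetical sketch assumes every unit uses GL_MODULATE and that each texel has already been sampled using that unit's own texture coordinates.

```python
# Hypothetical model of texture units, each in GL_MODULATE mode.
# Unit 0 combines the fragment color with texture 0; unit 1 combines that
# result with texture 1; and so on.

def modulate(a, b):
    return tuple(x * y for x, y in zip(a, b))

def multitexture(fragment_color, unit_samples):
    """unit_samples: the color each texture unit sampled, in unit order."""
    color = fragment_color
    for texel in unit_samples:
        color = modulate(texel, color)     # each unit's output feeds the next
    return color

base = (1.0, 1.0, 1.0)              # lit / glColor fragment color
brick = (0.8, 0.4, 0.2)             # sample from texture unit 0
light = (0.5, 0.5, 0.5)             # sample from texture unit 1 (e.g. a light map)
print(multitexture(base, [brick, light]))
```

Here the second texture darkens the brick color by half, which is how a separate lighting texture can be layered over a repeating surface texture.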

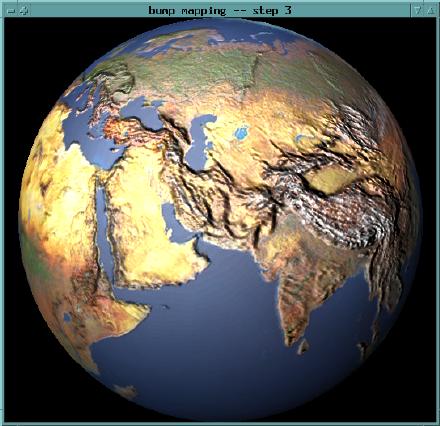

A technique to produce advanced lighting effects using textures, rather than (or in addition to) ordinary OpenGL lighting.

OpenGL lighting is only calculated at vertices. The results are interpolated across a polygon's face.

For large polygons, this can yield visibly wrong results.
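The problem with large polygons can be seen numerically. This hypothetical sketch places a point light just above the center of a 2 x 2 square and compares the interpolated per-vertex diffuse value at the center with the value computed directly at that pixel. It assumes simple Lambert diffuse lighting (N . L) and no attenuation.

```python
import math

# Hypothetical illustration of per-vertex lighting failing on a large polygon.
# Diffuse intensity is N . L (clamped at 0) for the square's normal (0, 0, 1).

def diffuse(point, light):
    lx, ly, lz = (l - p for l, p in zip(light, point))
    length = math.sqrt(lx * lx + ly * ly + lz * lz)
    return max(lz / length, 0.0)            # N = (0, 0, 1), so N . L = Lz

light = (0.0, 0.0, 0.2)
corners = [(-1, -1, 0), (1, -1, 0), (1, 1, 0), (-1, 1, 0)]

# Per-vertex lighting: evaluate at the corners, then interpolate.
# At the center, the interpolated value is the average of the four corners.
interpolated_center = sum(diffuse(c, light) for c in corners) / 4
# Evaluating the lighting directly at the center pixel instead:
true_center = diffuse((0, 0, 0), light)

print(round(interpolated_center, 3), round(true_center, 3))   # 0.14 1.0
```

The bright spot that should appear under the light is lost entirely, because no vertex lies near it.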

Also, OpenGL lighting does not produce shadows.

A simple way to use textures for lighting is to include shadows & lighting directly in the texture image applied to an object.

Some modeling packages can do this automatically (called "baking" the shadows into the textures, in at least one case).

In bump mapping, a texture is used to modify normals, which affect lighting, on a per-pixel basis.

Yields the appearance of a bumpy surface, without large amounts of geometry
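The core per-texel calculation can be sketched in pure Python: derive a normal from a height map by differencing neighboring heights, then light it with N . L. This is a hypothetical illustration only; real OpenGL bump mapping is typically done with texture combiners or shaders in tangent space, and the strength parameter here is an assumed tuning factor.

```python
import math

# Hypothetical sketch of bump mapping's core step: a per-texel normal from a
# height map, lit with N . L. heights[y][x] are height values.

def bump_normal(heights, x, y, strength=1.0):
    h, w = len(heights), len(heights[0])
    # Height differences across neighboring texels, clamped at the edges.
    dx = heights[y][min(x + 1, w - 1)] - heights[y][max(x - 1, 0)]
    dy = heights[min(y + 1, h - 1)][x] - heights[max(y - 1, 0)][x]
    n = (-strength * dx, -strength * dy, 1.0)
    length = math.sqrt(sum(c * c for c in n))
    return tuple(c / length for c in n)

def lambert(normal, light_dir):
    return max(sum(a * b for a, b in zip(normal, light_dir)), 0.0)

flat = [[0, 0, 0]] * 3
ramp = [[0, 1, 2]] * 3                  # height increases along +x
light_dir = (0.0, 0.0, 1.0)             # light straight above the surface

print(lambert(bump_normal(flat, 1, 1), light_dir))   # 1.0 (unperturbed normal)
print(lambert(bump_normal(ramp, 1, 1), light_dir))   # about 0.447: the slope is darker
```

The geometry stays a flat polygon; only the normal used for lighting changes from texel to texel.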